I’ve been writing for search for as long as I can recall. Long enough to have seen SEO move from something that felt vaguely mechanical into something that is now behavioural, probabilistic, and often difficult to reason about with certainty.

I also want to be clear about something before we go any further. Most of the writing I do today is not written for search at all.

It is written to explain ideas, to educate, or simply to be useful. You are reading this because that sort of writing exists.

What follows is different.

This is not about content marketing. It is not about blog posts, guides, or opinion pieces.

It is about money pages: landing pages, service pages, and product pages. Pages that exist to rank, to be selected, and ultimately to convert.

It is also about a growing misunderstanding.

SEO and what people now refer to as “GEO” are often spoken about as if they are the same activity, or at least as if one naturally extends from the other. They do not. They solve different problems, they operate under different constraints, and if you treat them as interchangeable, you will usually weaken both.

And with that warning out of the way, here's my 'surface-level guide' to writing for SEO and GEO.

Please understand that there's more to it than I'm showing here, but if you're stuck, it's a great starting point.

Modern SEO Is Not About the Page You Write

One of the biggest mistakes people still make is assuming that modern SEO is primarily about the document they publish. It is not.

Search engines do not rank pages in isolation.

They rank pages within a dense network of signals: links, mentions, historical performance, engagement, site structure, brand demand, and a large number of behavioural factors that are only partially observable.

In practice, modern SEO is about creating enough supporting signals in favour of a page that the page is allowed to compete for buyer-intent rankings.

This is why you will often see weaker landing pages outperform stronger ones. They are ranking despite the weakness, not because of it. And often they're ranking higher because of something you can't see.

For example, in local search, it's common to find agencies jumping ahead not because their service pages are better, but because they have recently generated signals elsewhere.

Many SEO agencies also build websites for clients. When they launch one, they strategically load the footer with links back to themselves: "SEO agency built by SEO agency X," "SEO agency Y," and so forth.

Google has always maintained that these shouldn't work, but in reality, they do. When you've just landed thousands of links like that, it does affect rankings. I've seen this time and time again. Much of what Google says doesn't work, does.

Equally, the same thing happens with the buying of links. Lots of people buy lots of links, and it does give short-term boosts. And sometimes those boosts last longer than they should.

I am not endorsing this. I am describing the environment.

The consequence of this environment is that on-page work must be efficient. You are not trying to create the perfect page. You are trying to ensure that when the wider system allows your page to compete, it is structurally aligned enough to win.

How I Optimise Pages for SEO Today: Step 1 (intelligence gathering)

Once keyword research is complete, the job is not to “write content”. The job is to understand what already performs and why.

I start with traditional tools like Ahrefs or SEMrush, along with a manual review of the search results. I am not interested in which page ranks first. I am interested in which page is generating the most meaningful visibility.

Very often, this is not the top result. It may be a page ranking lower that captures a wider spread of buyer-intent queries, brand-adjacent terms, or close variants. Those pages are usually far more instructive than the headline winner.

From there, I make sure I am comparing like with like.

A service page is compared with other service pages. A product page with other product pages. Comparing landing pages against blog content leads to the wrong structural decisions and wastes time.

I then extract the page assets.

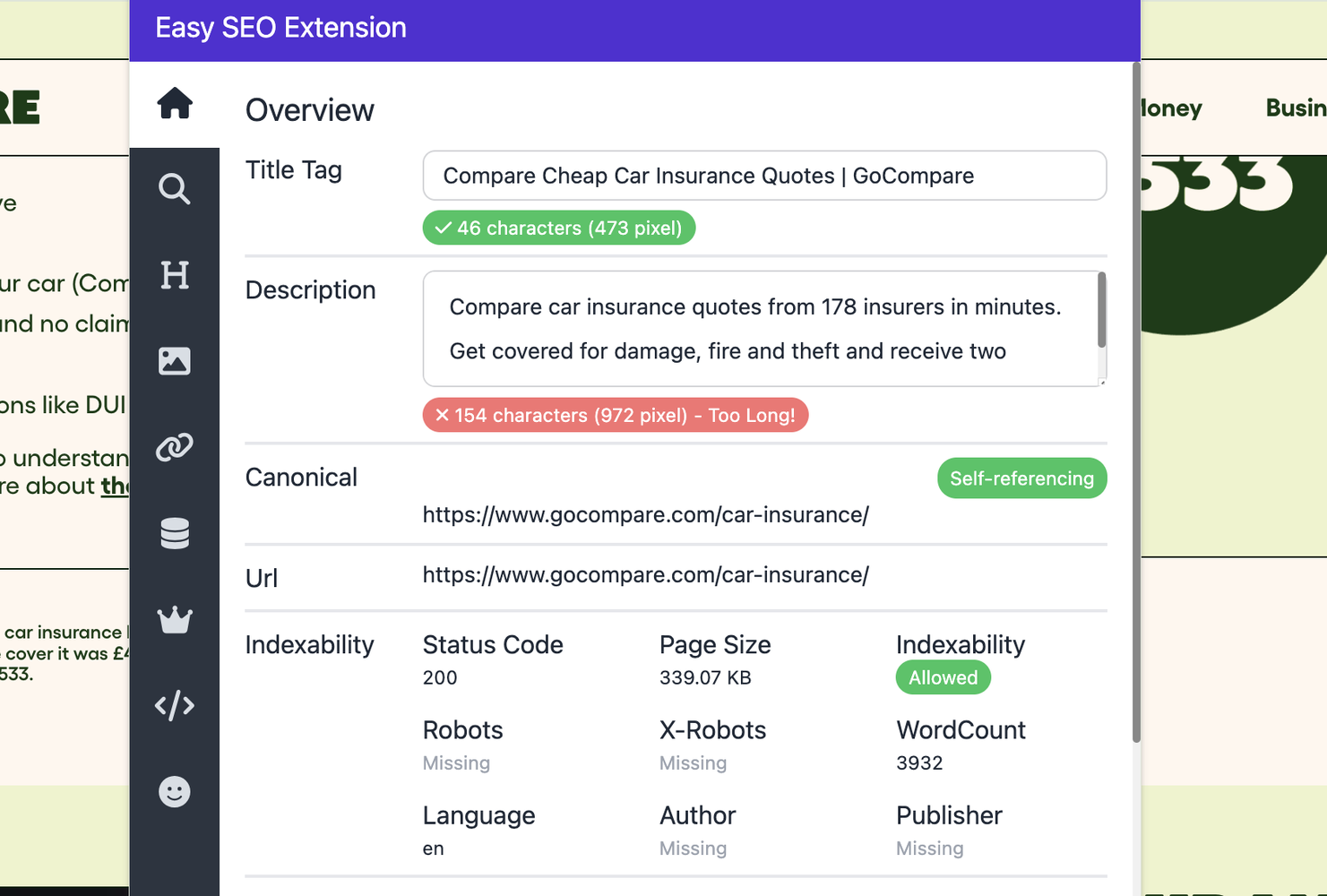

Using free tools such as the Easy SEO Chrome extension and Glen Allsopp's Detailed extension, I pull titles, meta descriptions, heading structures, and visible content blocks from a small number of strong competitor pages, alongside the page I am working on.

Everything goes into a single working document.

At this point, I use ChatGPT to run a deep comparative analysis.

The important caveat here is that I do not treat the output as truth. I have tested most commercial content analysis tools on the market, and they are often wrong. Humans performing the same analysis are often wrong as well.

What I am collecting is not evidence. It is intelligence.

The purpose of the analysis is to surface patterns, omissions, and possible misalignments that I can then sanity-check against the real search results. Sometimes the insight is useful. Sometimes it is not. The value lies in prompting better questions, not in outsourcing judgment.

But this research document is the start of how I optimise all money pages now.

Step 2: Structural alignment

When I optimise the page itself, I follow a few principles consistently.

The target keyword is present in the page title, meta description, and H1.

That is non-negotiable for a page whose primary job is to rank for a specific term.
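As a rough illustration of that check, here is a minimal Python sketch that pulls the title, meta description, and first H1 out of a page's HTML and reports whether the target keyword appears in each. The class and function names, and the sample page, are invented for this example. It uses plain substring matching, so it will miss close variants and plurals.

```python
from html.parser import HTMLParser


class PageAssetParser(HTMLParser):
    """Collects the <title>, meta description, and first <h1> text of a page."""

    def __init__(self):
        super().__init__()
        self.assets = {"title": "", "meta_description": "", "h1": ""}
        self._current = None   # tag whose text is currently being collected
        self._h1_done = False  # only the first <h1> is recorded

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._current = "title"
        elif tag == "h1" and not self._h1_done:
            self._current = "h1"
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.assets["meta_description"] = attrs.get("content") or ""

    def handle_endtag(self, tag):
        if tag == self._current:
            if tag == "h1":
                self._h1_done = True
            self._current = None

    def handle_data(self, data):
        if self._current:
            self.assets[self._current] += data


def keyword_coverage(html, keyword):
    """Return {element: bool} showing whether the keyword appears in each element."""
    parser = PageAssetParser()
    parser.feed(html)
    needle = keyword.lower()
    return {
        name: needle in " ".join(text.lower().split())
        for name, text in parser.assets.items()
    }


# Hypothetical page, invented purely for illustration.
sample_html = """
<html><head>
<title>Emergency Plumber in Leeds | Example Plumbing Co</title>
<meta name="description" content="Fast call-outs across Leeds, day or night.">
</head><body><h1>Emergency Plumber in Leeds</h1></body></html>
"""
result = keyword_coverage(sample_html, "emergency plumber")
print(result)
```

In this invented sample, the meta description is the element that fails the check, which is the kind of gap the working document should surface.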

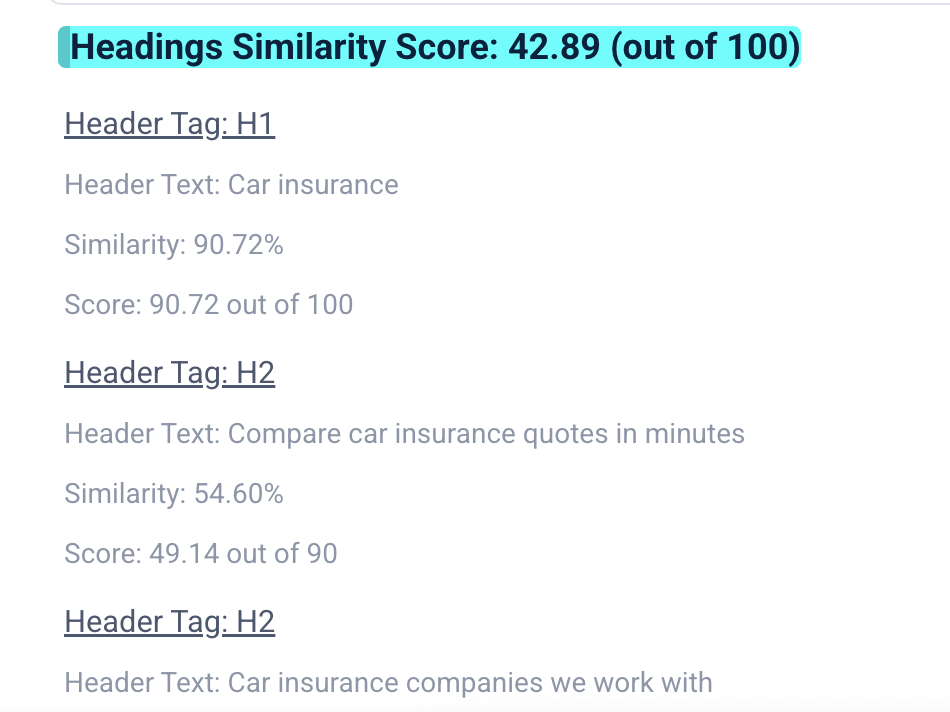

Beyond that, I pay close attention to heading alignment.

I am not looking for mechanical repetition, but I do want the majority of the page's headings to clearly relate to the keyword's core intent. Pages leak relevance when headings drift into loosely related or unnecessary subjects.

There is no hard science here. I've seen pages rank with a single H1 and no subheadings. Pages rank with messy heading hierarchies. But that does not mean alignment does not matter. It means SEO is noisy.

My aim is simply to give the page the best possible chance without making it read unnaturally.
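One crude way to spot heading drift is to list every heading and flag those that share no terms with the target keyword. The sketch below does that with Python's standard library. The names and sample headings are hypothetical, and term overlap is only a stand-in for intent: a flagged heading is a prompt to look again, not proof of a problem.

```python
import re
from html.parser import HTMLParser


class HeadingParser(HTMLParser):
    """Collects the text of every h1-h6 element in document order."""

    HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self):
        super().__init__()
        self.headings = []  # list of (tag, text) tuples
        self._open = None   # heading tag currently being read
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in self.HEADING_TAGS and self._open is None:
            self._open = tag
            self._buffer = []

    def handle_endtag(self, tag):
        if tag == self._open:
            text = " ".join("".join(self._buffer).split())
            self.headings.append((tag, text))
            self._open = None

    def handle_data(self, data):
        if self._open:
            self._buffer.append(data)


def heading_alignment(html, keyword):
    """Split headings into those sharing a keyword term and those that do not."""
    terms = set(re.findall(r"[a-z0-9]+", keyword.lower()))
    parser = HeadingParser()
    parser.feed(html)
    aligned, drifting = [], []
    for tag, text in parser.headings:
        words = set(re.findall(r"[a-z0-9]+", text.lower()))
        (aligned if terms & words else drifting).append((tag, text))
    return aligned, drifting


# Hypothetical headings, invented for illustration.
sample_html = (
    "<h1>Emergency Plumber in Leeds</h1>"
    "<h2>How our emergency call-outs work</h2>"
    "<h2>Plumber pricing</h2>"
    "<h2>Our company history</h2>"
)
aligned, drifting = heading_alignment(sample_html, "emergency plumber")
print("Aligned:", aligned)
print("Possibly drifting:", drifting)
```

Because the match is on individual words, a keyword containing common words such as "in" or "for" will match almost any heading, so strip those from the keyword before running a check like this.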

I then break the page into information packages (not chunks). Chunking is something that large language models and Google do to a page. But I don't chunk the content itself. Content exists in information packages on a page.

The Easy SEO Chrome extension highlights subheadings, which allows me to see information packages more clearly on a page.

Mostly, there will be an information package underneath or as part of a page heading. Whilst some headings can stand alone as an information package with no extra data, the Easy SEO extension just allows me to see things more clearly on a page.

In truth, a single headline can be an information package (sometimes referred to as a packet), and so can a short subsection in the footer. Essentially, an information package is a self-contained unit of information that exists on your website.

Each section should explain one thing clearly and stand on its own. If I lift a section out of the page, it should still make sense to a reader without relying on what comes before or after it.

This is not about fashionable terminology like chunking. It's about clarity. Each sentence either earns its place or it should go. The reason for this is more obvious than you think.

Based on how AI and modern web search work, a user can find themselves in the middle of a webpage without any other context. So it's important that your information packages can stand on their own and be understood, while still relating to everything else on the page.

Once we have every information package finalised, we move to the next step.

Step 3: Information efficiency

At this stage, I often review the language more closely, looking at sentence structure and clarity to identify weak or vague passages. Tools can help highlight issues, but they are guides only. The goal is not to satisfy a linguistic model. The goal is to increase efficiency: to say more, more clearly, with less.

This is information gain rate.

Information gain rate comes from information foraging theory. A useful way of thinking about adapting information for high information gain rate is that human information interaction systems will tend to maximise the value of external knowledge gained relative to the cost of interaction.

Cognitive systems engaged in information foraging will exhibit adaptive tendencies, and they will tend to prefer technologies that maximise the value or utility of knowledge gained per unit cost of interaction.

This is a fancy way of saying that almost all systems tend to optimise for information gain rate.

Humans, AI, and search engines are all looking to gain the most information for the least energy expenditure.
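For reference, information foraging theory (Pirolli and Card) usually expresses this rate as a simple ratio. Mapping it onto a landing page, as done in the notes beneath the formula, is my own loose reading rather than part of the theory.

```latex
R = \frac{G}{T_B + T_W}
```

Here R is the rate of information gain, G is the total value of the information gained, T_B is the time spent moving between information sources, and T_W is the time spent working within them. On a page, editing for efficiency is an attempt to raise G or lower T_W: the same useful information, extracted with less reading effort.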

As such, the goal at this stage is to be brutal and edit with efficiency.

After which, you should have a page which is highly optimised for search.

The Risk Most People Avoid Acknowledging

One uncomfortable truth about modern SEO is that optimisation is inherently risky.

If you change everything on a page and rankings fall, you will not know which change caused the problem. If rankings improve, you will not know which change helped. This is especially true on high-value pages where multiple keywords and intents overlap.

This process works most of the time. When it fails, it is usually because of structural issues outside the content itself — CMS limitations, navigation elements polluting heading hierarchies, or the removal of sections that were quietly supporting secondary visibility.

SEO is not a controlled experiment. It is an iterative diagnostic process.

And it's hard.

The golden rule of SEO is that if a page is ranking on page 1, don't mess with it. Instead, simply build internal and external links to the page.

That said, in competitive sectors, you can generate significant rankings and revenue increases by testing page elements. Contrary to what many will tell you, a change of one header on a page can dramatically affect performance, either increasing it or decreasing it if you get the change wrong.

Deleting a package of information can have the same effect: remove the wrong one, and performance can drop.

This certainly wasn't always the case, and I think it has become more apparent as Google leverages natural language processing in its search algorithms.

As such, it's important that customers of SEO understand that SEO is not a game of solid rules, inputs, or outputs. We're dealing with artificial intelligence, algorithms and signal noise across many different ranking factors.

As such, what follows is my 'advanced' advice and learnings over the years.

It is a tiny amount of the knowledge I've gained, but it may help some of you. For more advanced information, and if you have a particularly difficult issue, you need to speak to me personally.

Advanced Lessons From Content Surgery in Competitive Sectors

But before we go further, I have never worked with any of the sites I'm about to display. I have simply come across them through my work.

I'm not giving away any client secrets here, but learning points that you can apply to your situations. For some of you, these lessons are obvious and you will already know them. For others, they will be new. It just depends on where you are in your career.

Lesson 1: Small H1

Having a highly targeted H1 does not mean it needs to be the biggest text on your page.

Yes, it's true that many CMS systems make it that way by default.

But a nice trick many competitive pages use is to shrink their H1s to make them much smaller elements.

This allows you to both optimise for search and optimise for conversion.

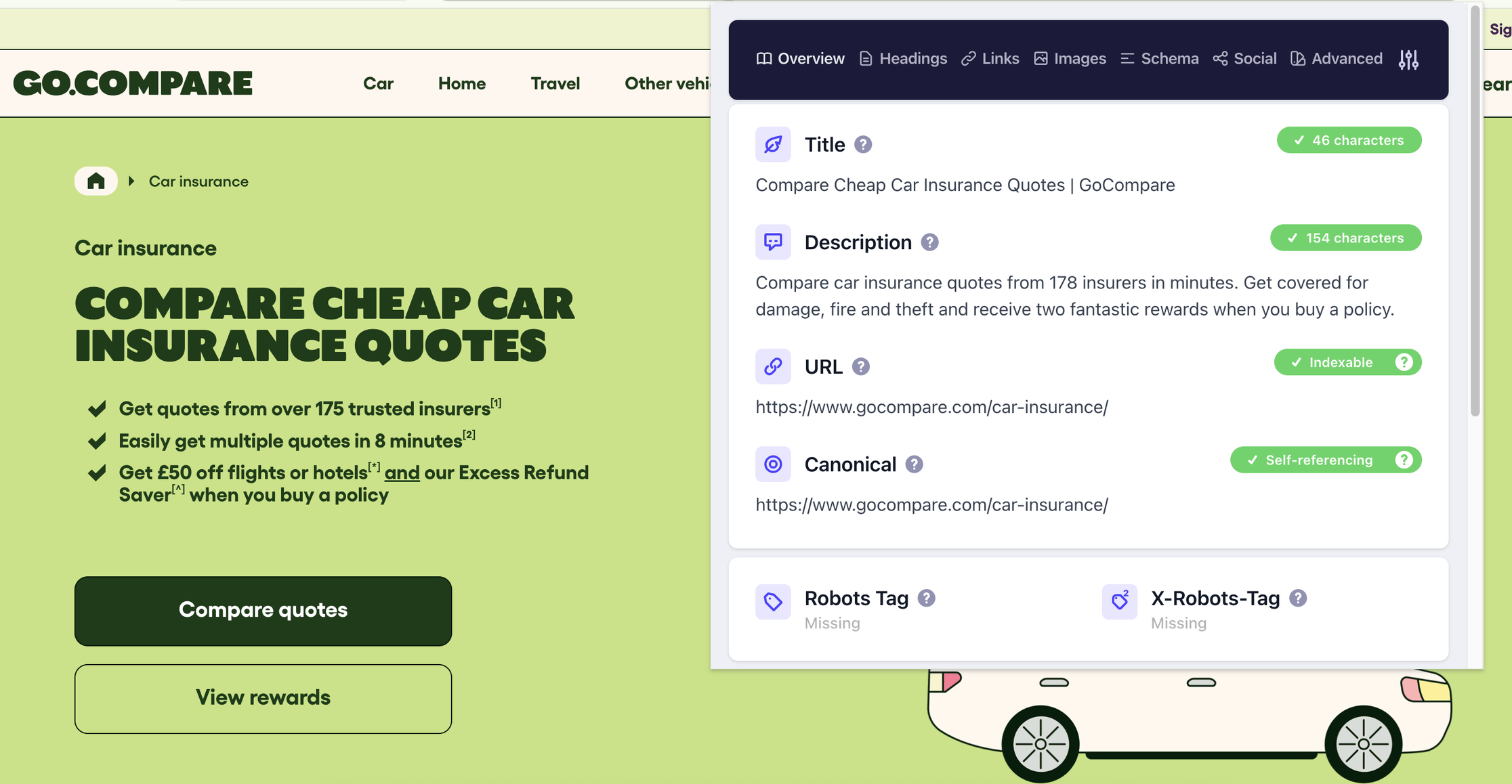

Lesson 2: Page Title Matches the Page Text

Another tip is to include your page title in the copy as early as possible, but not as a header.

In this example with Gocompare, they've got the broad H1 as car insurance because that is the big keyword. Then they've got their page title as compare cheap car insurance quotes.

And they've added that into the text directly below the H1 as 'paragraph text'.

This is a really useful technique to ensure you're getting both the primary keyword (in this case, car insurance) and what the page allows someone to achieve by visiting, which likely attracts more 'defined search'.

This is a good GEO tactic too.

Lesson 3: Advanced Content Analysis

If you're responsible for a webpage that generates hundreds of thousands of pounds in revenue or a significant volume of leads for your business, I would always suggest you conduct some kind of advanced content analysis.

I've used all kinds of tools that are available. However, one of the best is Market Brew AI.

This isn't an affiliate link, nor have I been paid to recommend Market Brew. And yes, I do know its owner, Scott Stouffer, but I'm genuinely impressed by Market Brew and how it's evolved as a tool.

Most content tools on the market are only designed for blog content. They do a terrible job analysing landing pages and category pages. If you're an in-house SEO, tools like this can be incredibly valuable.

You're gaining intelligence at a deep level around how a site is structured, how a search engine may be interacting with content, and you understand the mathematical benefits of certain elements of text on a page.

It will allow a deeper understanding of how search engines are reacting to the content.

Market Brew delves deeper than most tools, and I used it to gain a few wonderful insights I'll share now.

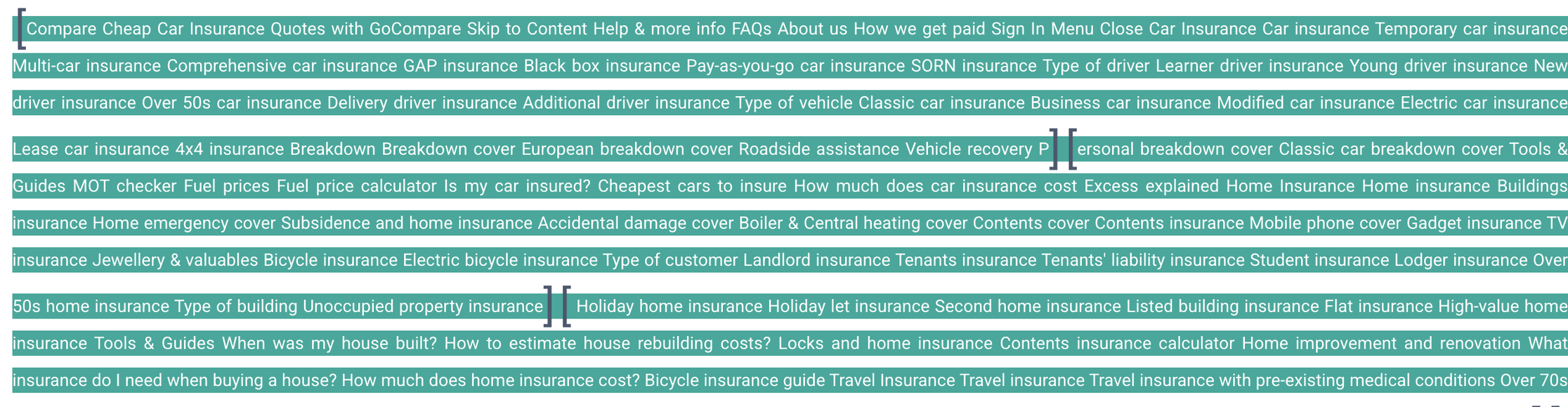

Lesson 4: Your Site Build Can Alter Search Performance

I've been analysing high-value web pages for a long time, and I can tell you one thing that is true. Your web build can alter your search performance.

In relation to this, I'm not talking about how fast your site is or whether your site's got structured data. I'm talking about the actual build of your site.

This is how your menu appears, how your menu is handled, and also how your page titles and headers work.

By using Market Brew you'll see that a lot of menu structures are pulled into the tool.

This is because of your site's DOM.

Think of the DOM (Document Object Model) as the skeleton or the "blueprint" of your webpage. While you see a visual design, the browser sees a tree-like map where every heading, image, and menu link is a "node" on a branch.

In the DOM tree, your menu is typically a dense cluster of links. From a structural standpoint, it’s a high-traffic intersection. If your site’s architecture is "flat," the menu might be just another branch. If it's "nested," the menu might sit at the very top of every single page's branch, effectively "shading" the unique content below it.

Modern AI doesn't just read your words; it vectorises the page. It turns your entire DOM structure into a mathematical coordinate in a multi-dimensional space.

If your menu DOM is massive and contains links to 50 unrelated topics, it creates "noise" that can dilute the specific topic of your page. The AI might struggle to figure out if your page is about "Car Insurance" or just a general "Money Saving" hub.

And if your most important header is "trapped" inside the same DOM container as your menu, an AI trying to filter out "navigation noise" might accidentally throw the baby out with the bathwater—de-weighting your main topic because it thinks it’s just part of the site-wide menu.
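A quick way to start investigating this on your own pages is to measure how much of a page's link markup sits inside navigation, and whether the H1 is nested inside it. The sketch below is a minimal example with invented names. It assumes the menu is marked up with a `<nav>` element (many sites use `<div>` containers with class names instead, which this will not catch), and it says nothing about how any search engine or AI system actually weights these structures.

```python
from html.parser import HTMLParser

# Elements that never have a closing tag, so they must not go on the stack.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class NavAudit(HTMLParser):
    """Counts links inside vs outside <nav> and flags an <h1> nested in <nav>."""

    def __init__(self):
        super().__init__()
        self.stack = []  # tags that are currently open
        self.nav_links = 0
        self.other_links = 0
        self.h1_inside_nav = False

    def handle_starttag(self, tag, attrs):
        in_nav = "nav" in self.stack
        if tag == "a":
            if in_nav:
                self.nav_links += 1
            else:
                self.other_links += 1
        elif tag == "h1" and in_nav:
            self.h1_inside_nav = True
        if tag not in VOID_TAGS:
            self.stack.append(tag)

    def handle_endtag(self, tag):
        # Pop back to the matching open tag, tolerating unclosed tags in between.
        if tag in self.stack:
            while self.stack.pop() != tag:
                pass


def nav_link_share(html):
    """Return (share of links that sit inside <nav>, whether an <h1> is inside <nav>)."""
    audit = NavAudit()
    audit.feed(html)
    total = audit.nav_links + audit.other_links
    share = audit.nav_links / total if total else 0.0
    return share, audit.h1_inside_nav


# Hypothetical markup, invented for illustration.
sample_html = (
    "<body><nav><a href='/a'>A</a><a href='/b'>B</a><a href='/c'>C</a>"
    "<h1>Car Insurance</h1></nav>"
    "<main><p>Compare quotes <a href='/d'>here</a>.</p></main></body>"
)
share, trapped = nav_link_share(sample_html)
print(f"Links inside nav: {share:.0%}, H1 inside nav: {trapped}")
```

A high share or a trapped H1 is not a verdict. It is a reason to open the rendered DOM with a developer and ask why the page is built that way.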

I've seen this more often than not at the moment.

In fact, a lot of site performance, positive or negative, might literally be down to how the site has been built.

The thing is, finding this kind of stuff out doesn't happen through some random SEO analysis or even an expensive audit by a top-class firm.

You need to hire somebody exceptionally talented with an investigative mindset and give them the freedom to roam, test things out, speak to people, and consult with tech specialists and developers themselves.

And I've seen these problems at all kinds of levels, from legal service clients to large, highly competitive sectors and industries.

Because search now leans so heavily on AI and natural language processing, we're entering a new world where the way a site is built is absolutely key to your organic and AI search performance.

But more so, the way a site is structured and built may be absolutely crushing every SEO attempt you are making to increase the rankings.

And no, these things aren't easily visible, though AI is quite good at finding and hunting them down.

When I'm brought in to do advanced consulting with larger clients, it's these kinds of things that I'm considering and looking for.

Lesson 5: Sentence Structure Matters (More Than Ever)

Sentence structure has always mattered in SEO, but its importance has increased as search engines have become more reliant on AI and natural language processing.

At a basic level, clear sentence construction helps search engines understand what a page is about. Simple, well-formed sentences make relationships between ideas explicit, reduce ambiguity, and improve the overall efficiency of a document.

This is why subject–verb–object sentence structures still matter.

They are not mandatory, but they provide clarity. They make it obvious who is doing what, and why it matters. When pages drift too far into abstract, indirect, or overly complex sentence styles, meaning becomes harder to extract.

That said, not every sentence needs to follow the same structure.

Different sentence styles also change how natural language processing systems interpret text.

Understanding how sentence structures impact Large Language Models (LLMs) requires looking at two factors: attention mechanisms (how the model weights specific words) and probabilistic sequencing (how likely a word is to follow another based on training data).

While LLMs are much better at handling complex syntax than older models, "information density" and "positional bias" still play a huge role in how they interpret your meaning.

I've listed a few sentence structures below, all of which you can use and experiment with to ensure your page copy flows well and is easier for natural language processing to understand.

Preposing: Moving non-initial elements to the start of the sentence.

Postposing: Delaying complex elements to the end of the sentence.

Inversion: Reordering the typical subject–verb structure, such as fronting adverbials or using negative constructions.

Existential Clauses: Introducing new entities into the discourse (e.g., “There is...”).

Extraposition: Shifting complex clauses (e.g., moving a heavy subject to the end with a dummy subject “it”).

Dislocation: Separating referents with comma-separated phrases (left or right dislocation).

Clefts: Partitioning sentences into focus and presupposition segments (e.g., “It is X that...” or “What Y wants is...”).

Passive Voice: Reordering sentences to emphasise actions or recipients over the subject.

Both search engines and large language models rely on modern AI systems that interpret meaning, not just keywords.

Poor sentence construction does not just weaken traditional relevance signals; it also makes pages harder for generative systems to summarise, interpret, and recommend.

The takeaway is simple: write with structural clarity.

Use different sentence styles when they serve a purpose, but default to clarity over cleverness. Pages that are easy for machines to understand are usually easier for humans to understand as well, and that now benefits both rankings and recommendations.

Okay, so that's an overview of optimising content for SEO. Let's move into GEO.

What We Are Actually Doing When We Optimise for Generative Engines

To understand why this approach exists, you have to accept one uncomfortable truth about search.

Keywords are not problems. They are approximations of problems.

Traditional SEO has always been document-led. We take a keyword, infer intent, and then optimise a page to match how we believe someone would describe their own problem in a search box. That logic still works, but it has a ceiling, and that ceiling is now visible.

Large language models do not work from keyword abstractions. They work from problem descriptions.

When someone uses a generative system, they are not defining themselves with a keyword. They are describing a situation, a constraint, a frustration, or an outcome they want to achieve. The model’s job is not to retrieve documents that match a phrase. Its job is to recommend solutions that appear to resolve that situation.

This is why optimising pages for generative engines requires a different mental model.

We are not trying to rank documents. We are trying to make pages, products, brands, and services legible as solutions.

From Document-Based Logic to Problem-Based Logic

Search engine optimisation is fundamentally document-based.

Even with all the advances in machine learning, the core task is still the same: take a query, retrieve documents, and rank them by relevance signals. The document is the unit of competition, and the keyword is the organising principle.

Generative systems operate on a different axis.

When a large language model evaluates your page in a buying or evaluation context, it is not assessing topical coverage. It is assessing problem–solution fit.

Can this 'entity' be confidently described as a solution to the problem the user is describing?

If the answer is unclear, the entity is unlikely to be recommended, regardless of how well it performs in traditional search.

Why This Escapes Keyword Fatigue

Keyword-led optimisation assumes that people define themselves consistently.

They do not.

Two people with the same problem will often describe it in completely different ways. One might search for a product category. Another might describe a symptom. A third might explain an outcome they are trying to avoid. A fourth might not know what to call the problem at all.

Traditional SEO forces you to choose which of those people you want to serve by choosing a keyword.

Problem-based optimisation does not.

By making the page explicitly about the problem being solved, who it applies to, how it is solved, and why this solution exists, you increase the likelihood that the page is relevant across many ways of describing the same underlying need.

This is the strategic advantage.

You are no longer dependent on how someone labels themselves with a keyword. You are aligning the page to the reality of the problem, not the vocabulary used to describe it.

In generative environments, this matters far more than perfect keyword matching.

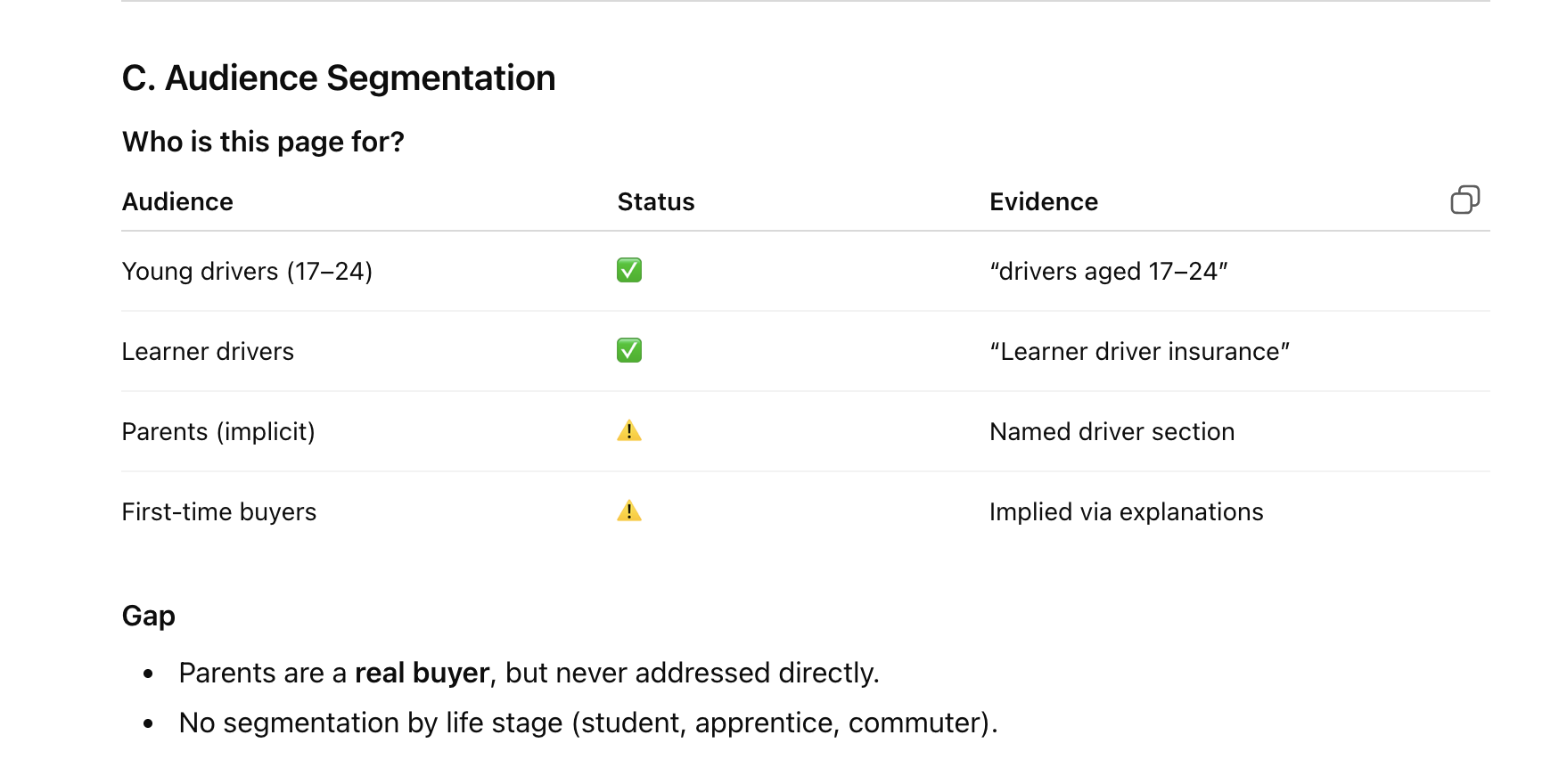

How to Optimise a Page for GEO

When I optimise a page with generative systems in mind, I am not adding more content. I am not broadening coverage. I am doing the opposite.

I am removing ambiguity.

Specifically, I want the page to make the following things unmistakably clear:

- What this product, service, or business is.

- What problem the product, business, or page solves.

- Who it solves the problem for.

- How it solves the problem.

- How it solves the problem better than competitors.

- Proof that it has solved, or can solve, the problem.

If any of these are implied rather than stated, the page becomes harder to recommend.

It's why this work looks closer to positioning and sales logic than to traditional SEO copy. It is not about satisfying a crawler. It is about making the page easy to summarise as a recommendation.

When it comes to optimising pages for generative engine optimisation, I now use a simple process.

It's a decidedly simple framework for establishing a brand's 'on-page' positioning.

Step 1: Extract the page's content, including the page title and meta description.

There are a few tools and a few ways to do this. Essentially, though, copy-and-paste is arguably the fastest.

Step 2: Use AI to analyse the page

You will be shocked at how much you can gain from analysing a page using AI with a simple prompt.

A prompt in ChatGPT or Gemini will yield many insights into how AI views the text on the page, and, indeed, whether the core positioning is clear.

You must look for evidence that all positioning elements are satisfied.

And also look for weaknesses.
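To make the analysis repeatable across pages, the prompt can be assembled from the extracted content rather than retyped each time. The sketch below builds one from the six positioning points listed earlier. The prompt wording and function name are my own illustration, not a tested or recommended prompt, and the result is simply text to paste into ChatGPT or Gemini.

```python
POSITIONING_QUESTIONS = [
    "What is this product, service, or business?",
    "What problem does it solve?",
    "Who does it solve the problem for?",
    "How does it solve the problem?",
    "How does it solve the problem better than competitors?",
    "What proof is given that it has solved, or can solve, the problem?",
]


def build_positioning_prompt(page_title, meta_description, body_text):
    """Assemble a plain-text prompt asking a model to audit on-page positioning."""
    questions = "\n".join(
        f"{number}. {question}"
        for number, question in enumerate(POSITIONING_QUESTIONS, start=1)
    )
    return (
        "You are reviewing a commercial web page.\n"
        "For each question below, quote the exact sentence from the page that "
        "answers it, or reply 'NOT STATED' if the answer is only implied or missing.\n"
        "Then list the weakest or most ambiguous statements on the page.\n\n"
        f"Questions:\n{questions}\n\n"
        f"PAGE TITLE: {page_title}\n"
        f"META DESCRIPTION: {meta_description}\n"
        f"PAGE TEXT:\n{body_text}\n"
    )


# Hypothetical page content, invented for illustration.
prompt = build_positioning_prompt(
    page_title="Emergency Plumber in Leeds | Example Plumbing Co",
    meta_description="Fast call-outs across Leeds, day or night.",
    body_text="We fix burst pipes and failed boilers for homeowners in Leeds.",
)
print(prompt)
```

Asking for quoted sentences or 'NOT STATED' keeps the output easy to check against the page, which matters because the model's reading is intelligence to verify, not evidence.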

Step 3: Page improvement strategy

Once you've isolated that the page's positioning is weak, not aligned, or not explicitly addressed, you can start fixing it.

It would be relatively easy to dump all this on AI and ask it to fix it for you. However, at this stage, I think it's clear you need a human in the loop to ensure that the page is absolutely positioned perfectly.

That person will be an experienced and trained copywriter.

And here's how I suggest you work with a copywriter.

When GEO Meets SEO

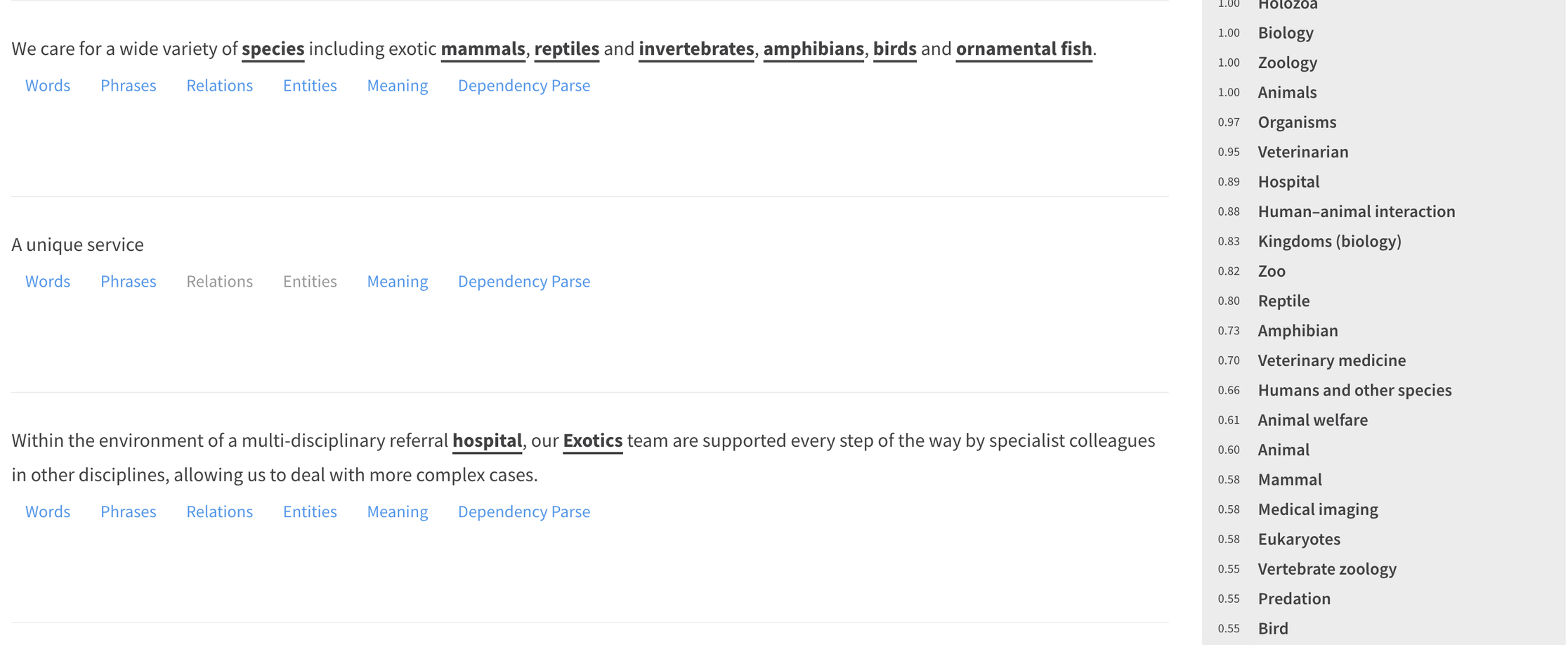

One of the best ways to work with a copywriter, and indeed to bring GEO and SEO together, is to dump all the content from your page into a tool such as TextRazor.

Once TextRazor has done its analysis on the webpage, you'll have every single sentence of the text, including all the entities, and you can copy and paste that directly into a Word document or a Google Doc.

Conveniently, all the entities will be bolded for you in the text, and you'll have every single sentence highlighted and blocked out.

This gives any freelance copywriter and SEO a collaborative space to work on the text together.

Here you can ask a talented copywriter to improve the copy and page positioning, whilst you, as the SEO, can maintain control over the essentials for SEO.

The roles here are very clear.

You, as the SEO, have to maintain search engine optimisation of the page.

The copywriter's job is to improve the page's positioning in relation to the problem it solves and a brand's or product's performance characteristics.

It's here that you get the best of both worlds: the search engine optimisation skills combined with the product copywriting skills of an experienced and trained copywriter.

The SEO can check whether the page is leaking intent or weakening search signals, while the copywriter works on strengthening the positioning.

But overall control of the page needs to be held by the SEO. It is the SEO who decides exactly how this page will be represented in search, because it's search that carries, at this time, the biggest bang for your buck.

That might not always be the case, but for now it is.

A Word of Warning

To finish, I need to be clear about what this article is, and what it is not.

This has been a surface-level approach to both search engine optimisation and generative engine optimisation. It is not a complete guide to either discipline. There is significantly more depth, nuance, and experimentation happening in this space than can be covered in a single article.

What I have tried to do here is explain how on-page thinking needs to change, and why treating SEO and GEO as the same thing will eventually hold you back.

As generative engine optimisation becomes more prominent, and as Google continues to embed AI more deeply into search and discovery, the centre of gravity will continue to shift.

The brands that win will be the ones that are clearly positioned to do specific things for specific people with specific problems, and that can clearly evidence how they solve those problems better than anyone else.

In that environment, positioning becomes the dominant force. Brand clarity, product clarity, and proof will matter more and more. Traditional search optimisation will not disappear, but it will increasingly play a supporting role rather than the lead.

Everything I have covered here relates only to on-page SEO and GEO.

The off-page world (links, digital PR, brand demand, distribution, authority) is far broader and far more influential. But what we cannot afford to do is pretend that GEO is simply “SEO renamed”.

It is not.

As I’ve said from the beginning, SEO is about rankings. GEO is about recommendations.

SEO is about optimising for how humans use search engines to find answers. GEO is about increasing the likelihood that your brand is suggested when someone is deciding what to buy or who to use.

Yes, there is overlap. But the objectives are different, and the thinking must be different.

My advice to SEOs is simple: lead this conversation. Understand the distinction between retrieval and recommendation. Help your clients and your organisation navigate it sensibly, rather than reacting defensively or dismissively. The people who define the language and the frameworks early are the ones who shape how this evolves.

There is a real danger in saying GEO is “just SEO”. There is an equally real danger in assuming SEO alone can solve every problem.

In reality, GEO is a collaborative channel. It sits at the intersection of SEO, digital PR, paid media, social, copywriting, and brand strategy.

When those disciplines are aligned around increasing the likelihood of recommendation — not just visibility — the results are stronger, more defensible, and easier to justify at the board level.

That is where this is heading.

And the sooner we stop arguing about labels and start designing for how decisions are actually made, the better positioned we will all be.

Andrew Holland